Lecture 7: Classification II: Evaluation and Tuning

DSCI 100

Housekeeping

- Your midterm is THIS WEEK!

- Closed-book Canvas quiz

- Requires LockDown Browser (LDB). Make sure it is installed before your midterm, and test it using the practice quiz.

Today: Unanswered Questions from Last Class

Is our model any good? How do we evaluate it?

How do we choose K in K-nearest neighbours classification?

How do we measure classifier performance?

Accuracy

\[Accuracy = \dfrac{\#\; correct\; predictions}{\#\; total\; predictions}\]
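As a quick illustration, the formula takes only a few lines of Python. This is a minimal sketch using the standard library; the course itself works in R with tidymodels. The function name `accuracy` and the toy label lists are hypothetical.

```python
# Minimal sketch: accuracy = (# correct predictions) / (# total predictions).
# The function name and the toy label lists are hypothetical, for illustration only.

def accuracy(true_labels, predicted_labels):
    """Return the fraction of predictions that match the true labels."""
    if len(true_labels) != len(predicted_labels):
        raise ValueError("label lists must have the same length")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)


truth = ["Malignant", "Benign", "Benign", "Malignant", "Benign"]
preds = ["Malignant", "Benign", "Malignant", "Benign", "Benign"]
print(accuracy(truth, preds))  # 3 of 5 correct -> 0.6
```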


Confusion matrix

Here is an example of a confusion matrix for the cancer diagnosis data we’ve seen before.

| | Truly Malignant | Truly Benign |
|---|---|---|
| Predicted Malignant | 1 | 4 |
| Predicted Benign | 3 | 57 |


Confusion matrix

Typically we designate one of the class labels as “positive”. Here, the “Malignant” status is of more interest to researchers, so it is the positive label.

Relabeling the above confusion matrix:

| | Truly Positive | Truly Negative |
|---|---|---|
| Predicted Positive | 1 | 4 |
| Predicted Negative | 3 | 57 |

Note that:

- Top-left cell = # correct positive predictions.
- Top row = # total positive predictions.
- Left column = # truly positive observations.
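The same bookkeeping can be sketched in Python by walking through (true label, predicted label) pairs and counting which of the four cells each pair falls into. This is a standard-library sketch, not a tidymodels function. `confusion_counts` and the toy labels are hypothetical, and “Malignant” is assumed to be the positive label.

```python
# Sketch: tally the four confusion-matrix cells, treating one label as "positive".
# The function name and the toy label lists are hypothetical, for illustration only.

def confusion_counts(true_labels, predicted_labels, positive="Malignant"):
    """Return (TP, FP, FN, TN) counts for the given positive label."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(true_labels, predicted_labels):
        if pred == positive and truth == positive:
            tp += 1  # top-left cell: correct positive prediction
        elif pred == positive:
            fp += 1  # top-right cell: predicted positive, truly negative
        elif truth == positive:
            fn += 1  # bottom-left cell: predicted negative, truly positive
        else:
            tn += 1  # bottom-right cell: correct negative prediction
    return tp, fp, fn, tn


truth = ["Malignant", "Benign", "Benign", "Malignant", "Benign"]
preds = ["Malignant", "Benign", "Malignant", "Benign", "Benign"]
tp, fp, fn, tn = confusion_counts(truth, preds)
print("top row (total positive predictions):", tp + fp)
print("left column (truly positive observations):", tp + fn)
```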


Precision and Recall

\[ {Precision} = \dfrac{{\#\; correct\; positive\; predictions}}{{\#\; total\; positive\; predictions}} \]

\[ {Recall} = \dfrac{{\#\; correct\; positive\; predictions}}{{\#\; total\; truly\; positive\; observations}} \]

Using the confusion matrix from before:

| | Truly Positive | Truly Negative |
|---|---|---|
| Predicted Positive | 1 | 4 |
| Predicted Negative | 3 | 57 |

Here, precision = 1/(1+4) = 0.2 and recall = 1/(1+3) = 0.25.
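Plugging the four cells of the table into the definitions gives these numbers directly. The Python below simply writes out that arithmetic, with accuracy included for comparison, and treats Malignant as the positive label.

```python
# Precision, recall and accuracy from the confusion-matrix counts above
# (Malignant treated as the positive label).
tp, fp = 1, 4   # top row: predicted positive
fn, tn = 3, 57  # bottom row: predicted negative

precision = tp / (tp + fp)  # correct positive predictions / total positive predictions
recall = tp / (tp + fn)     # correct positive predictions / truly positive observations
accuracy = (tp + tn) / (tp + fp + fn + tn)

print(f"precision = {precision:.2f}")  # 0.20
print(f"recall    = {recall:.2f}")     # 0.25
print(f"accuracy  = {accuracy:.2f}")   # 0.89
```

Accuracy is close to 0.9 here, yet only one of the four malignant cases is caught.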


Which Metric is More Important?

It is application/context dependent. Use your judgement/logical reasoning.

For example:

A 99% accuracy on cancer prediction may not be very useful. Why?

If we need patients who truly have malignant cancer to be diagnosed correctly, which metric should we prioritize?

What if a classifier never guesses positive except for the very few observations it is extremely confident about? Which metric is affected?
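One way to see the problem with accuracy on imbalanced data is to reuse the class totals from the confusion matrix above (1 + 3 = 4 truly malignant, 4 + 57 = 61 truly benign) and imagine a hypothetical classifier that always predicts “Benign”. The Python sketch below computes its accuracy and recall. Its precision is undefined, because it makes no positive predictions at all.

```python
# Sketch: a hypothetical classifier that never predicts the positive class.
# Class totals come from the confusion matrix above: 4 malignant, 61 benign.
truth = ["Malignant"] * 4 + ["Benign"] * 61
preds = ["Benign"] * len(truth)  # never guesses positive

correct = sum(t == p for t, p in zip(truth, preds))
accuracy = correct / len(truth)

true_pos = sum(t == p == "Malignant" for t, p in zip(truth, preds))
recall = true_pos / truth.count("Malignant")

print(f"accuracy = {accuracy:.3f}")  # 61/65, about 0.938
print(f"recall   = {recall:.3f}")    # 0.000: every malignant case is missed
```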


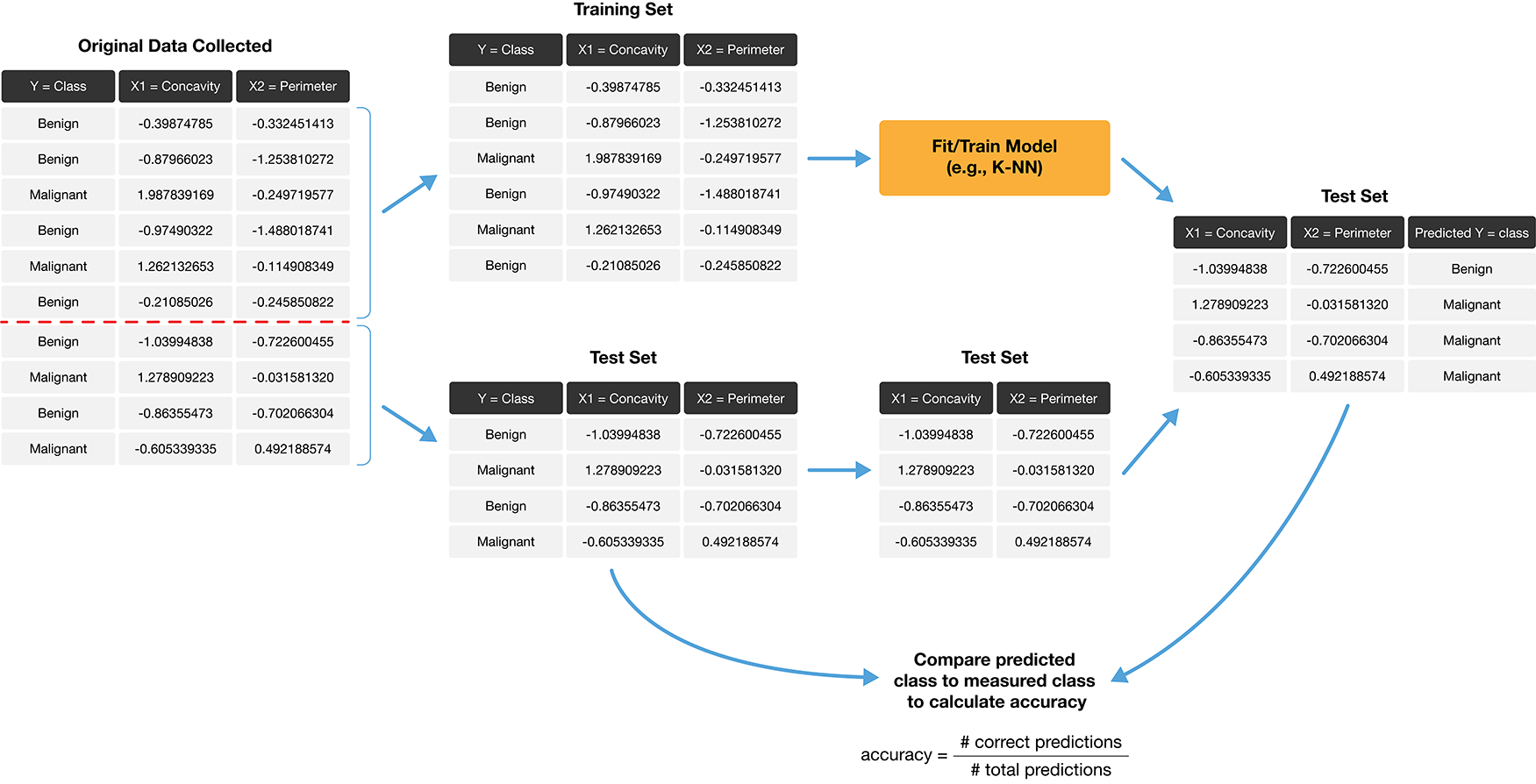

Adding evaluation to the pipeline for building a classifier

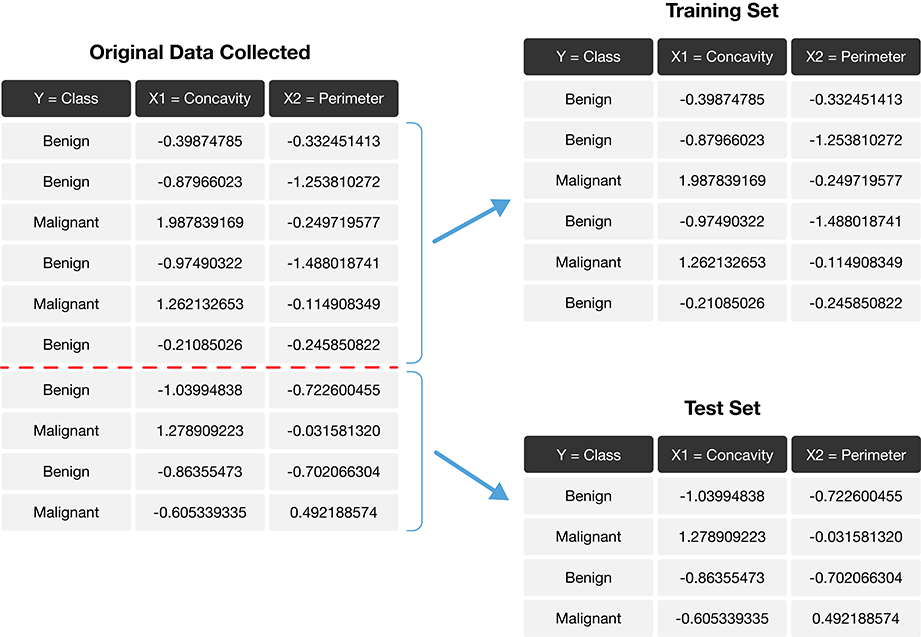

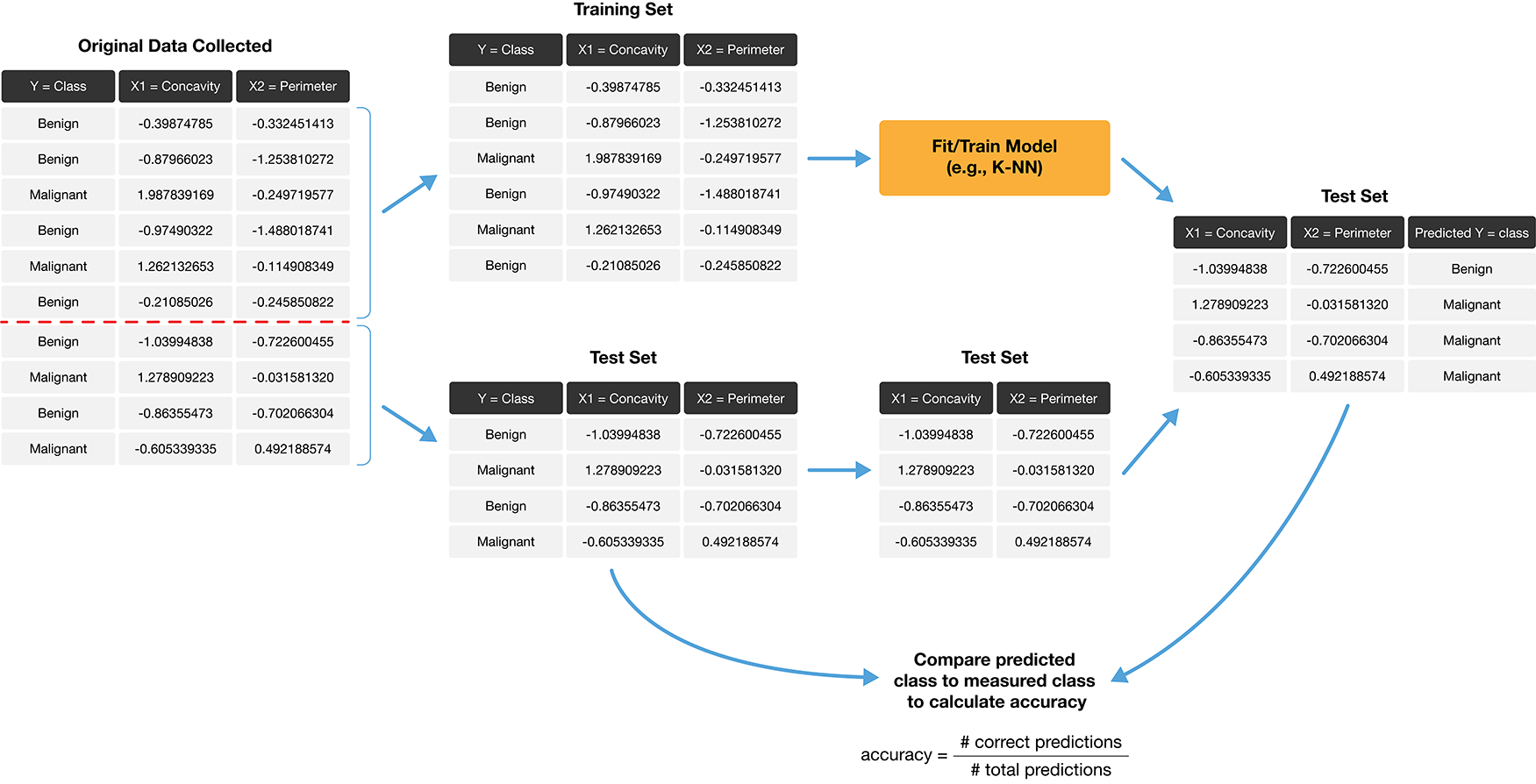

To add evaluation into our classification pipeline, we:

- Split our data into two subsets: training data and testing data.

- Build the model and choose K using the training data only (choosing K is sometimes called tuning).

- Compute performance metrics (accuracy, precision, recall, etc.) by predicting labels on the testing data only.

We’ll now talk about each step individually.

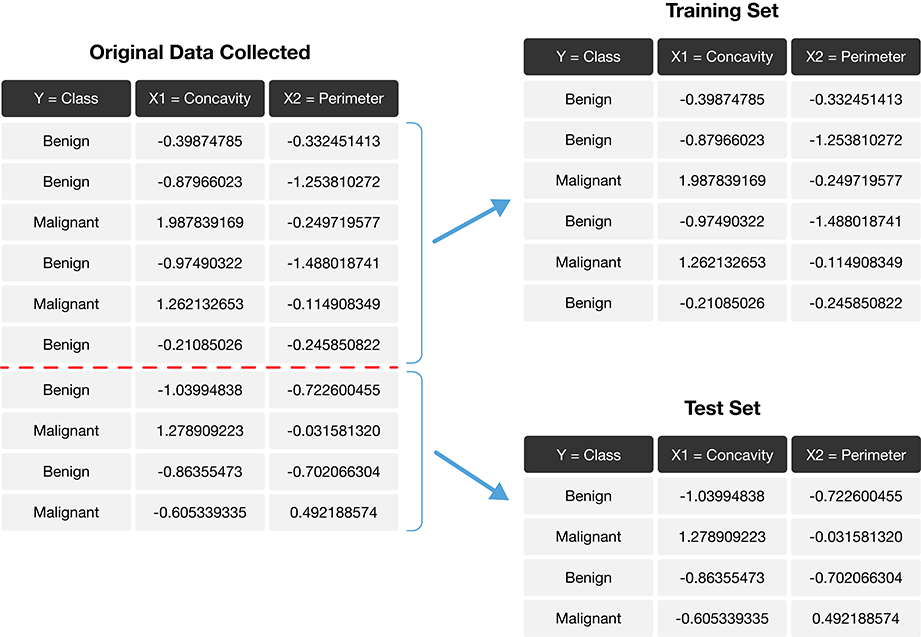

Step 1. Split our data into two subsets


Step 1. Split our data into two subsets

The role of the training and test sets


Golden Rule of Machine Learning / Data Science

Don’t use your testing data to train your model!

Why?

Showing your classifier the labels of the evaluation data is like cheating on a test: it will look more accurate than it really is.

- “Training your model” includes choosing K, choosing predictors, choosing the model, scaling/centering variables, and so on!
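Here is the golden rule applied to centering and scaling. The centre and spread are learned from the training data only and then reused, unchanged, on the test data. This is a minimal Python sketch using the standard `statistics` module. The helper names `fit_scaler` and `apply_scaler` and the values are hypothetical.

```python
# Sketch: learn centering/scaling parameters from training data only,
# then apply the same parameters to the test data. Values are hypothetical.
from statistics import mean, stdev


def fit_scaler(train_values):
    """Learn the centre (mean) and scale (standard deviation) from training data."""
    return mean(train_values), stdev(train_values)


def apply_scaler(values, params):
    """Standardize values using previously learned parameters."""
    center, scale = params
    return [(v - center) / scale for v in values]


train_x = [10.0, 12.0, 14.0, 16.0, 18.0]
test_x = [11.0, 20.0]

params = fit_scaler(train_x)  # test_x is never used to compute these
train_scaled = apply_scaler(train_x, params)
test_scaled = apply_scaler(test_x, params)
print(params)
print(test_scaled)
```

If the mean and standard deviation were computed on all the data together, information from the test set would leak into the trained model.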


How to split data

There are two important things to do when splitting data.

- Shuffling: randomly reorder the data before splitting

- Stratification: make sure the two subsets have roughly the same proportion of each label

Why?

(tidymodels handles both: `initial_split` shuffles the data automatically, and it stratifies when you pass the label column to its `strata` argument.)
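For intuition about what such a split does, here is a standard-library Python sketch. It is not the tidymodels implementation. It shuffles the rows within each label, then sends the same proportion of each label to the training set. The function name `stratified_split`, the 75% training proportion and the toy labels are assumptions.

```python
# Sketch of a shuffled, stratified train/test split (standard library only).
# The function name, the 75% proportion and the toy labels are hypothetical.
import random
from collections import defaultdict


def stratified_split(labels, prop=0.75, seed=1):
    """Return (train_idx, test_idx) so each label keeps roughly the same proportion."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for i, label in enumerate(labels):
        by_label[label].append(i)
    train_idx, test_idx = [], []
    for idx in by_label.values():
        rng.shuffle(idx)                  # shuffling: random order within each label
        n_train = round(prop * len(idx))  # stratification: split each label separately
        train_idx += idx[:n_train]
        test_idx += idx[n_train:]
    rng.shuffle(train_idx)
    rng.shuffle(test_idx)
    return train_idx, test_idx


labels = ["Malignant"] * 20 + ["Benign"] * 60
train_idx, test_idx = stratified_split(labels)
for name, idx in [("train", train_idx), ("test", test_idx)]:
    share = sum(labels[i] == "Malignant" for i in idx) / len(idx)
    print(name, len(idx), f"malignant share = {share:.2f}")
```

With 20 malignant and 60 benign rows, both subsets end up 25% malignant.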

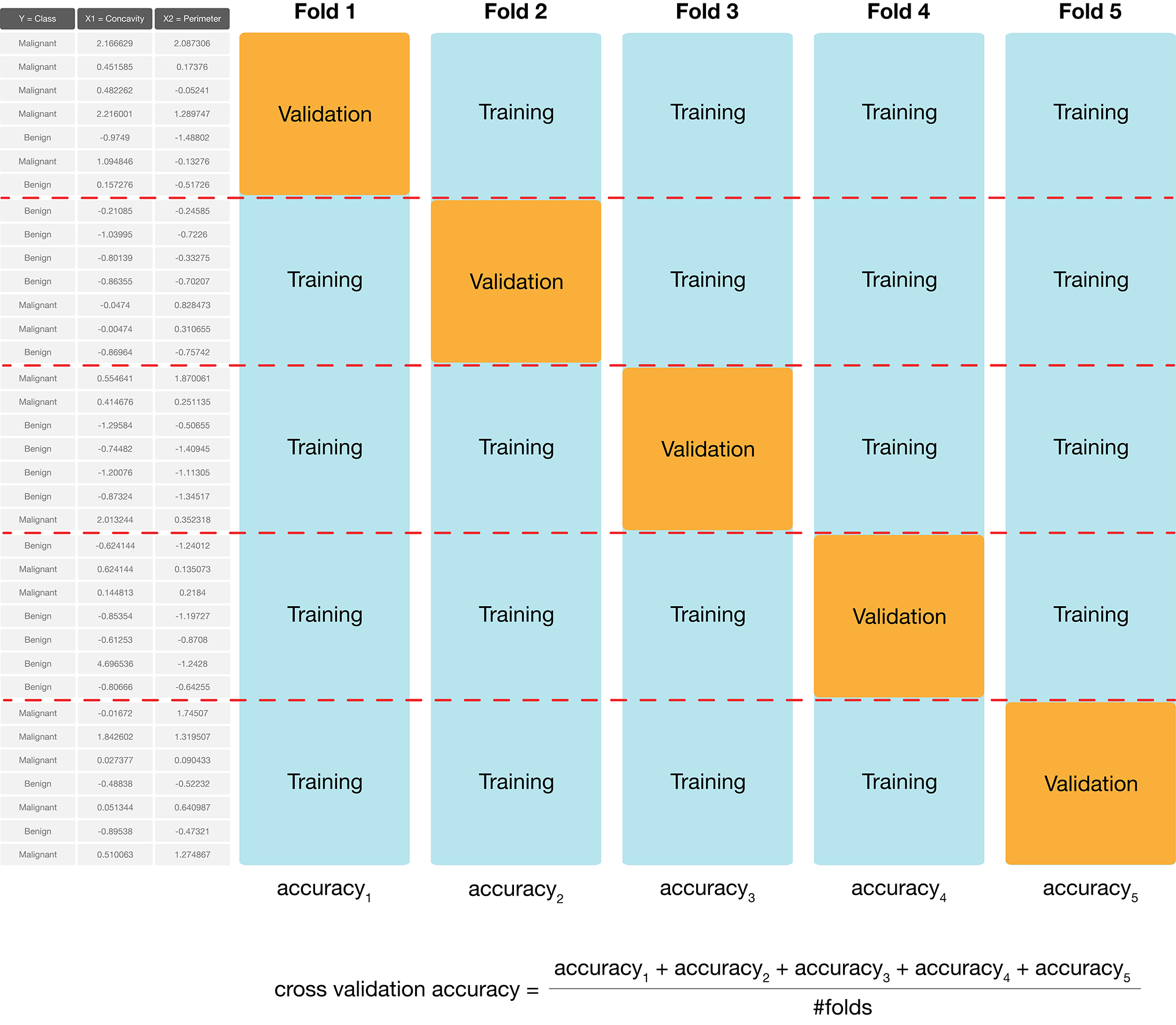

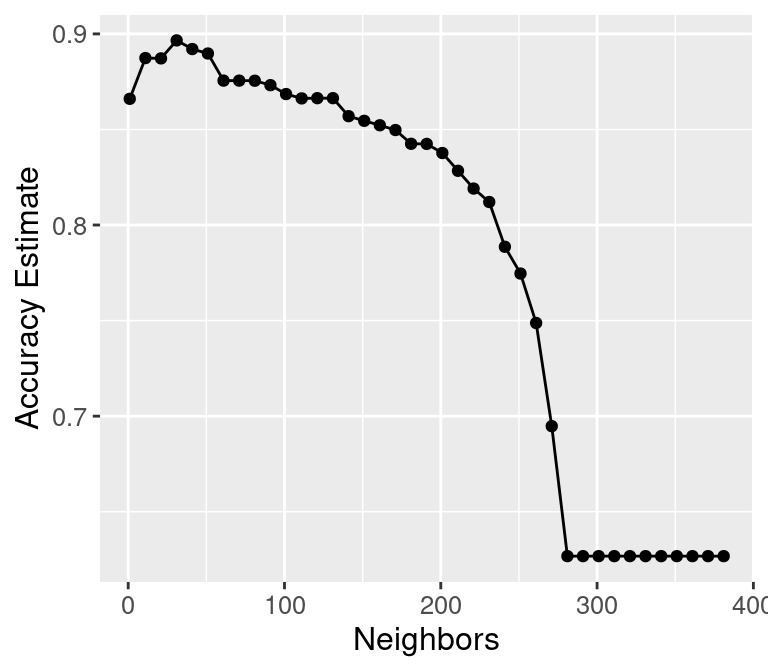

Step 2: Choosing K (or “tuning” the model)

Choosing K is part of training. We want to choose the K that maximizes performance, but:

- we can’t use the test data to evaluate performance (cheating!)
- we can’t use the training data to evaluate performance either (the model has already seen it, so the estimate would be overly optimistic)

Solution: split the training data further into a training set and a validation set.

2a. Choose some candidate values of K

2b. Split the training data into two sets - one called the training set, another called the validation set

2c. For each K, train the model using the training set only

2d. Evaluate accuracy (and/or other performance metrics) for each K using the validation set only

2e. Pick the K that maximizes validation performance
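Steps 2a to 2e can be sketched in Python with a hand-written K-nearest neighbours classifier. For KNN, “training” just means storing the training points. Everything here is hypothetical: the synthetic two-class data, the helper names and the candidate K values. The data stand in for the training data only, and the test set is assumed to be held out elsewhere and never touched.

```python
# Sketch of steps 2a-2e with a single validation split (standard library only).
# Synthetic data, helper names and candidate K values are hypothetical.
import math
import random
from collections import Counter


def knn_predict(train, query, k):
    """Majority vote among the k training points closest to `query` (Euclidean distance)."""
    nearest = sorted(train, key=lambda row: math.dist(row[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def accuracy(train, evaluate, k):
    correct = sum(knn_predict(train, x, k) == y for x, y in evaluate)
    return correct / len(evaluate)


rng = random.Random(42)
# Synthetic "training data": two overlapping clouds of points, one per class.
data = [((rng.gauss(0, 1), rng.gauss(0, 1)), "A") for _ in range(60)] + \
       [((rng.gauss(1.5, 1), rng.gauss(1.5, 1)), "B") for _ in range(60)]

rng.shuffle(data)
train_set, validation_set = data[:90], data[90:]       # 2b: split the training data

candidate_ks = [1, 3, 5, 7, 9, 15, 25]                # 2a: candidate values of K
val_acc = {k: accuracy(train_set, validation_set, k)  # 2c + 2d: neighbours come from
           for k in candidate_ks}                     # train_set, scored on validation_set
best_k = max(val_acc, key=val_acc.get)                # 2e: highest validation accuracy
print(val_acc)
print("chosen K:", best_k)
```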

But what if, just by chance, the split gives us an unrepresentative training or validation set?


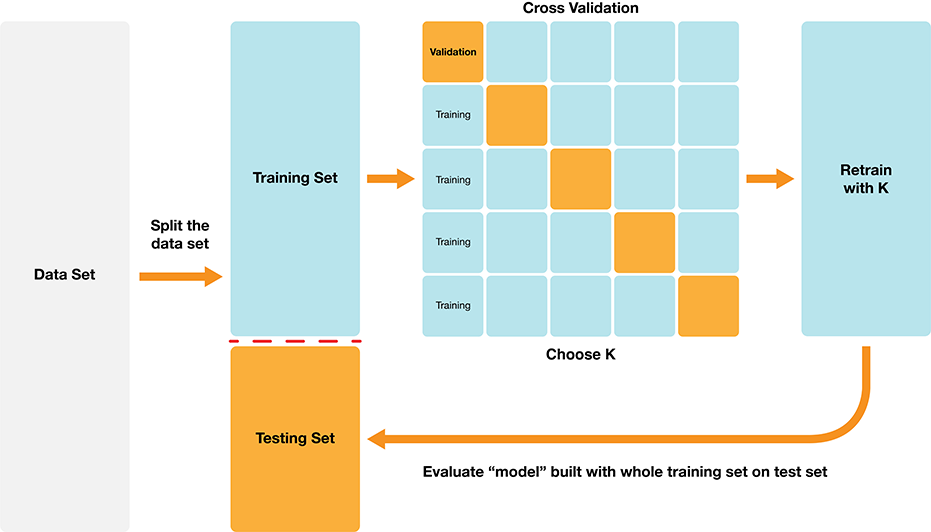

Cross-Validation

We can get a better estimate of performance by splitting the training data in several different ways and averaging the validation results. Here’s an example:


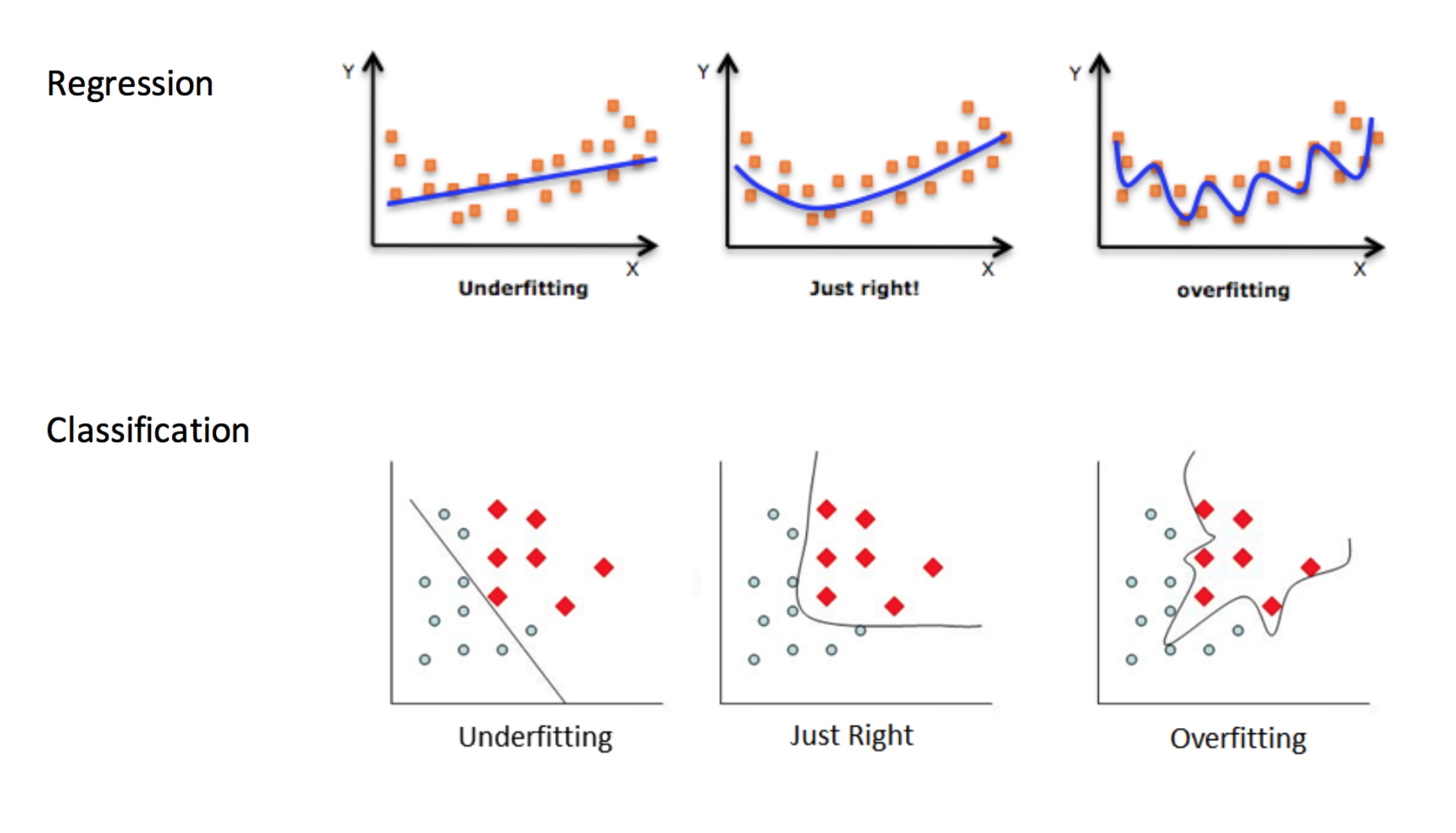
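Cross-validation can be sketched in Python as follows. Cut the (already shuffled) training data into several folds. Let each fold take a turn as the validation set while the others are used for training. Then average the validation accuracies for each candidate K. The synthetic data, the helper names and the choice of 5 folds are hypothetical. Here `n_folds` counts folds, and K is still the number of neighbours.

```python
# Sketch of cross-validation for choosing K (standard library only).
# Synthetic data, helper names, 5 folds and candidate K values are hypothetical.
import math
import random
from collections import Counter


def knn_predict(train, query, k):
    """Majority vote among the k nearest training points (Euclidean distance)."""
    nearest = sorted(train, key=lambda row: math.dist(row[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def cv_accuracy(data, k, n_folds=5):
    """Average validation accuracy over n_folds train/validation splits."""
    folds = [data[i::n_folds] for i in range(n_folds)]  # data is shuffled beforehand
    scores = []
    for i, validation in enumerate(folds):
        # every fold except fold i is used for training in this round
        train = [row for j, fold in enumerate(folds) if j != i for row in fold]
        correct = sum(knn_predict(train, x, k) == y for x, y in validation)
        scores.append(correct / len(validation))
    return sum(scores) / n_folds


rng = random.Random(0)
data = [((rng.gauss(0, 1), rng.gauss(0, 1)), "A") for _ in range(50)] + \
       [((rng.gauss(1.5, 1), rng.gauss(1.5, 1)), "B") for _ in range(50)]
rng.shuffle(data)

cv_scores = {k: cv_accuracy(data, k) for k in [1, 3, 5, 7, 9, 15]}
best_k = max(cv_scores, key=cv_scores.get)
print({k: round(s, 3) for k, s in cv_scores.items()})
print("chosen K:", best_k)
```

Each observation is used for validation exactly once, so a single unlucky split has less influence on the chosen K.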

Underfitting & Overfitting

Overfitting: when your model is too sensitive to your training data; noise can influence predictions!

Underfitting: when your model isn’t sensitive enough to training data; useful information is ignored!

Source: http://kerckhoffs.schaathun.net/FPIA/Slides/09OF.pdf


Underfitting & Overfitting

Which of these are under-, over-, and good fits?


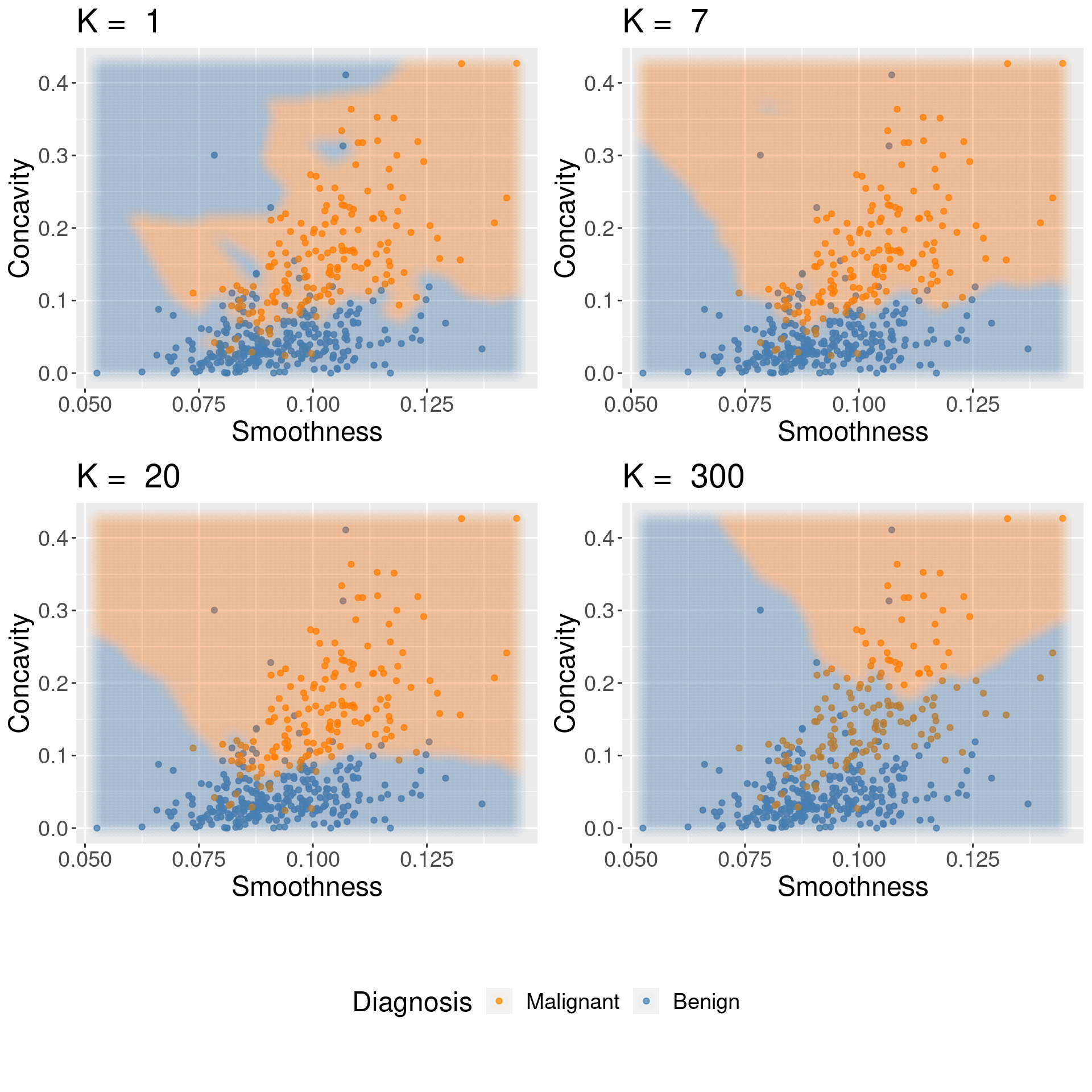

Underfitting & Overfitting

For KNN: small K overfits, large K underfits, and both lead to lower accuracy on new data.
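Both extremes can be checked with a small Python sketch on hypothetical synthetic data (40 points of class A, 20 of class B). With K = 1, each training point is its own nearest neighbour, so the classifier reproduces every training label, noise included. With K equal to the number of training points, every query receives the same majority vote.

```python
# Sketch of the two extremes of K on hypothetical synthetic data (standard library only).
import math
import random
from collections import Counter


def knn_predict(train, query, k):
    """Majority vote among the k nearest training points (Euclidean distance)."""
    nearest = sorted(train, key=lambda row: math.dist(row[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


rng = random.Random(7)
train = [((rng.gauss(0, 1), rng.gauss(0, 1)), "A") for _ in range(40)] + \
        [((rng.gauss(1, 1), rng.gauss(1, 1)), "B") for _ in range(20)]

# K = 1: each training point is its own nearest neighbour (distance 0), so every
# training label is reproduced, noise included -> perfect training accuracy (overfitting).
train_acc_k1 = sum(knn_predict(train, x, 1) == y for x, y in train) / len(train)

# K = all training points: every query gets the same majority-class vote (underfitting).
k_all = len(train)
preds_k_all = {knn_predict(train, (rng.uniform(-3, 3), rng.uniform(-3, 3)), k_all)
               for _ in range(10)}

print("training accuracy with K = 1:", train_acc_k1)    # 1.0
print("distinct predictions with K = n:", preds_k_all)  # {'A'}
```

Perfect training accuracy at K = 1 says nothing about accuracy on new data, which is why K is chosen using validation performance.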


Step 3: Compute metrics of performance

IMPORTANT: We compute the metrics by predicting labels on the testing data only.

Recall the role of the test data
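Here is a Python sketch of step 3. Once K has been chosen using the training data, the classifier predicts each test observation once, and accuracy, precision and recall are computed from those predictions. The synthetic data, the helper names and `chosen_k = 5` are hypothetical placeholders for the result of step 2.

```python
# Sketch of step 3: predict the test labels once with the chosen K, then compute metrics.
# Synthetic data, helper names and chosen_k = 5 are hypothetical.
import math
import random
from collections import Counter


def knn_predict(train, query, k):
    """Majority vote among the k nearest training points (Euclidean distance)."""
    nearest = sorted(train, key=lambda row: math.dist(row[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def make_points(n, center, label, rng):
    return [((rng.gauss(center, 1), rng.gauss(center, 1)), label) for _ in range(n)]


rng = random.Random(3)
training = make_points(45, 0.0, "Benign", rng) + make_points(15, 2.0, "Malignant", rng)
testing = make_points(15, 0.0, "Benign", rng) + make_points(5, 2.0, "Malignant", rng)

chosen_k = 5  # assumed to come from cross-validation on the training data (step 2)
pairs = [(y, knn_predict(training, x, chosen_k)) for x, y in testing]  # (truth, prediction)

tp = sum(t == p == "Malignant" for t, p in pairs)
fp = sum(p == "Malignant" and t != p for t, p in pairs)
fn = sum(t == "Malignant" and t != p for t, p in pairs)
accuracy = sum(t == p for t, p in pairs) / len(pairs)
precision = tp / (tp + fp) if tp + fp else float("nan")  # undefined with no positive predictions
recall = tp / (tp + fn)
print(f"test accuracy={accuracy:.2f} precision={precision:.2f} recall={recall:.2f}")
```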


The Big Picture


Worksheet Time! Go for it!

Let’s finish our iClicker problems and start on this week’s worksheet.

There is no tutorial this week.